DeepEval review in one sentence — it is the most SDET-friendly LLM testing framework available in 2026 and the closest thing to a pytest for AI applications that actually works in production CI/CD pipelines.

I tested DeepEval hands-on, evaluated its metrics against real LLM outputs, and compared it honestly against RAGAS and Braintrust. This review covers everything a QA engineer or SDET needs to decide whether DeepEval belongs in their stack.


What Is DeepEval?

DeepEval is an open-source Python framework for testing LLM applications. It was built by the team at Confident AI and is often described as “Pytest for LLMs” — and that analogy is accurate.

If you already write PyTest-based automation, DeepEval feels immediately familiar. You write test cases, define metrics, set thresholds, and run evaluations. Pass or fail. The difference is that instead of asserting exact string matches, you are asserting semantic quality scores.
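To make that contrast concrete, here is a minimal sketch in plain Python. The `toy_semantic_score` function is a hypothetical stand-in for an LLM judge (it only measures word overlap) and the 0.4 threshold is an arbitrary assumption; neither reflects how DeepEval scores anything. The point is only the shape of the assertion.

```python
import re


def toy_semantic_score(actual: str, expected: str) -> float:
    """Hypothetical stand-in for an LLM judge: Jaccard overlap of word sets."""
    a = set(re.findall(r"[a-z0-9]+", actual.lower()))
    b = set(re.findall(r"[a-z0-9]+", expected.lower()))
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


expected = "Returns accepted within 30 days."
actual = "We accept returns within 30 days of purchase."

# Traditional assertion: fails because the wording differs.
exact_match = actual == expected

# Threshold-style assertion: passes if the score clears the bar.
score = toy_semantic_score(actual, expected)
passes_threshold = score >= 0.4  # assumed threshold, for illustration only
```

The exact-match check rejects a perfectly acceptable answer, while the score-based check accepts it. A real judge model replaces the word-overlap heuristic, but the pass/fail logic keeps this form.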

This DeepEval review covers the open-source framework specifically. Confident AI is the commercial cloud platform built on top — more on that distinction in the pricing section.

For context on why LLM testing requires a completely different approach from traditional automation, read our guide to testing LLM applications.

Who Is DeepEval Best For?

Before diving deeper into this DeepEval review, here is the honest “who should use this” summary:

DeepEval is ideal for:

- SDETs and QA engineers with Python automation experience

- Teams building RAG-based applications that need faithfulness testing

- Engineers who want LLM quality gates inside existing GitHub Actions pipelines

- Developers building AI agents that need multi-turn conversation evaluation

- Teams wanting zero-cost local evaluation using Ollama

DeepEval is not ideal for:

- Teams without Python knowledge — the learning curve requires PyTest familiarity

- Projects needing academic research-grade RAG metrics — RAGAS has an edge there

- Very large evaluation suites without async batching — pipeline timeout risk is real

- Teams expecting a no-code dashboard on the free tier — that requires the Confident AI paid plan

DeepEval vs RAGAS vs Braintrust — Quick Comparison

| Feature | DeepEval | RAGAS | Braintrust |
|---|---|---|---|
| Developer Experience | ⭐⭐⭐⭐⭐ Excellent | ⭐⭐⭐ Moderate | ⭐⭐⭐⭐ Good |
| Pytest Integration | ✅ Native | ❌ Manual setup | ❌ Manual setup |
| CI/CD Integration | ✅ Built-in | ⚠️ Extra config | ✅ Available |
| RAG Metrics | ✅ Strong | ✅ Best in class | ⚠️ Limited |
| Agent Testing | ✅ 2026 update | ❌ Limited | ✅ Available |
| Local LLM Support | ✅ Via Ollama | ⚠️ Limited | ❌ Cloud only |
| Free Tier | ✅ Full open source | ✅ Open source | ⚠️ Limited |
| Synthetic Data Gen | ✅ Built-in | ❌ No | ⚠️ Limited |
| Best For | SDETs and CI/CD | Academic RAG research | Enterprise teams |

Verdict: DeepEval wins on developer experience and CI/CD integration. RAGAS wins on academic RAG research depth. Braintrust wins for large enterprise team collaboration. For most SDETs reading this review, DeepEval is the right starting point.

DeepEval Review — Key Features in 2026

PyTest-Native Architecture

This is DeepEval’s defining advantage for SDETs. Your entire evaluation suite uses the same syntax, fixtures, and runner you already use for Python automation.

```python
from deepeval import assert_test
from deepeval.metrics import (
    AnswerRelevancyMetric,
    FaithfulnessMetric,
    HallucinationMetric,
)
from deepeval.test_case import LLMTestCase


def test_customer_support_bot():
    policy = "Our return policy allows customers to return items within 30 days."
    test_case = LLMTestCase(
        input="What is your return policy?",
        actual_output="We accept returns within 30 days of purchase.",
        expected_output="Returns accepted within 30 days.",
        # FaithfulnessMetric reads retrieval_context ...
        retrieval_context=[policy],
        # ... while HallucinationMetric reads context.
        context=[policy],
    )
    assert_test(test_case, [
        AnswerRelevancyMetric(threshold=0.7),  # minimum passing score
        FaithfulnessMetric(threshold=0.8),     # minimum passing score
        HallucinationMetric(threshold=0.2),    # maximum allowed score (lower is better)
    ])
```

Run this with `deepeval test run test_suite.py`. The workflow mirrors a pytest run, and the familiarity is genuine and immediately productive for any SDET already writing Python automation.

The Core Metrics — What DeepEval Actually Measures

DeepEval covers three categories of metrics in 2026:

RAG and Retrieval Metrics:

- Faithfulness — Is the answer grounded in the retrieved context or hallucinated?

- Answer Relevancy — Does the response actually address what was asked?

- Contextual Precision — Did the retrieval system fetch the right documents?

- Contextual Recall — Did it fetch ALL the relevant documents?

- Contextual Relevancy — Are the retrieved chunks actually useful?

Generation Metrics:

- Hallucination — Did the model fabricate facts not present in context?

- Answer Correctness — Does the response match the ground truth?

- G-Eval — Custom metric using natural language criteria for subjective quality

Agentic Metrics (Major 2026 Addition):

- Task Completion — Did the agent complete the assigned goal?

- Tool Correctness — Did it call the right tools in the right order?

- Step Efficiency — Did it complete the task without unnecessary steps?
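To show what the agentic questions above are getting at, here is a toy sketch in plain Python. It is not DeepEval's implementation: the scoring rules (longest common subsequence for tool order, a simple ratio for efficiency) and the tool names are assumptions chosen for illustration.

```python
def tool_correctness(expected: list[str], called: list[str]) -> float:
    """Fraction of expected tool calls that appear in `called` in order.

    Illustrative only: uses the longest common subsequence, so missing or
    out-of-order calls lower the score. Extra calls are ignored here.
    """
    if not expected:
        return 1.0
    rows, cols = len(expected), len(called)
    lcs = [[0] * (cols + 1) for _ in range(rows + 1)]
    for i in range(rows):
        for j in range(cols):
            if expected[i] == called[j]:
                lcs[i + 1][j + 1] = lcs[i][j] + 1
            else:
                lcs[i + 1][j + 1] = max(lcs[i][j + 1], lcs[i + 1][j])
    return lcs[rows][cols] / rows


def step_efficiency(minimal_steps: int, actual_steps: int) -> float:
    """Ratio of the shortest known path to the steps actually taken, capped at 1."""
    if actual_steps == 0:
        return 0.0
    return min(1.0, minimal_steps / actual_steps)


# Hypothetical tool names for a flight-booking agent.
expected_calls = ["search_flights", "select_flight", "book_flight"]
swapped = ["select_flight", "search_flights", "book_flight"]

in_order_score = tool_correctness(expected_calls, expected_calls)
swapped_score = tool_correctness(expected_calls, swapped)
efficiency = step_efficiency(minimal_steps=3, actual_steps=5)
```

An agent that calls the right tools in the wrong order scores lower than one that follows the expected sequence, and an agent that takes five steps for a three-step task is penalised on efficiency even if it eventually succeeds.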

G-Eval — The Most Flexible Metric

G-Eval deserves special attention in this DeepEval review because it is genuinely powerful for SDETs who need to test subjective criteria.

Traditional metrics test for factual accuracy. G-Eval lets you define custom metrics in plain English:

```python
from deepeval.metrics import GEval
from deepeval.test_case import LLMTestCaseParams

tone_metric = GEval(
    name="Professional Tone",
    criteria="The response should be professional, concise and avoid casual language",
    evaluation_params=[LLMTestCaseParams.ACTUAL_OUTPUT],
    threshold=0.7,
)
```

This is the DeepEval equivalent of writing a custom assertion: you define what “good” means in natural language, and DeepEval uses LLM-as-a-Judge to score it.

Important caveat — G-Eval scores are not the absolute truth. Independent 2026 benchmarks show that DeepEval’s statement decomposition method occasionally underscores perfect responses compared to human raters. Treat G-Eval scores as strong signals, not certainties. Implement periodic human-in-the-loop auditing for high-stakes applications.
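One way to run such an audit is to have a human score a small sample of responses and compare those scores with the judge's. The sketch below is a minimal illustration in plain Python; the sample scores are invented, and the function name and the 0.7 threshold are assumptions.

```python
from statistics import mean

# Hypothetical audit sample: (judge_score, human_score), both on a 0-1 scale.
audit_sample = [
    (0.65, 0.90),  # judge underscored a response the human rated highly
    (0.80, 0.85),
    (0.40, 0.35),
    (0.75, 0.70),
]
THRESHOLD = 0.7


def audit(pairs, threshold):
    """Return mean absolute error and the indices where pass/fail verdicts differ."""
    mae = mean(abs(judge - human) for judge, human in pairs)
    disagreements = [
        index
        for index, (judge, human) in enumerate(pairs)
        if (judge >= threshold) != (human >= threshold)
    ]
    return mae, disagreements


mae, disagreements = audit(audit_sample, THRESHOLD)
```

The verdict disagreements matter more than the raw error: a case where the judge fails a response that a human would pass is a false alarm in your pipeline, and a pattern of them suggests the criteria or the threshold needs adjusting.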

Multi-Turn Agent Testing — The Biggest 2026 Update

Single-prompt testing was sufficient for early chatbots. In 2026, most production LLM applications are multi-turn agents — systems that maintain conversation state, call external tools, and complete multi-step tasks autonomously.

DeepEval’s 2026 agentic testing covers entire conversation sessions:

```python
from deepeval import assert_test
from deepeval.metrics import ConversationCompletenessMetric
from deepeval.test_case import ConversationalTestCase, LLMTestCase

# Note: some DeepEval releases expect Turn objects in `turns` instead of
# LLMTestCase; check the docs for the version you have installed.
conversation = ConversationalTestCase(
    turns=[
        LLMTestCase(
            input="Book me a flight to London",
            actual_output="I found 3 flights. Which date works for you?",
        ),
        LLMTestCase(
            input="March 15th please",
            actual_output="Flight confirmed for March 15th. Confirmation sent.",
        ),
    ]
)

metric = ConversationCompletenessMetric(threshold=0.8)
assert_test(conversation, [metric])
```

This tests whether the agent completed the user’s goal across the entire conversation, not just whether individual responses were accurate.

CI/CD Integration — DeepEval as a Quality Gate

This is where DeepEval scores highest for SDETs. Blocking a deployment when LLM quality drops is the core use case, and DeepEval makes it straightforward.

```yaml
# .github/workflows/llm-quality-gate.yml
name: LLM Quality Gate

on: [push, pull_request]

jobs:
  deepeval:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v3
      - name: Set up Python
        uses: actions/setup-python@v4
        with:
          python-version: '3.11'
      - name: Install DeepEval
        run: pip install deepeval
      - name: Run evaluations
        run: deepeval test run tests/test_llm_quality.py
        env:
          OPENAI_API_KEY: ${{ secrets.OPENAI_API_KEY }}
        timeout-minutes: 15
```

Critical note: the timeout. LLM evaluations are significantly slower than traditional unit tests. A suite of 100 DeepEval tests using GPT-4o as a judge takes 5 to 10 minutes versus seconds for Selenium or API tests. Always set explicit timeout limits in your pipeline configuration, or you risk pipeline hangs.
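The usual way to keep a large suite inside that time budget is to run judge calls concurrently, but with a cap so you do not hit rate limits. The sketch below shows the general pattern with `asyncio`; `fake_judge` is a hypothetical stand-in for a real judge call, and the concurrency and timeout values are assumptions. DeepEval has its own concurrency settings, so treat this as an illustration of the idea rather than the framework's API.

```python
import asyncio


async def fake_judge(case_id: int) -> float:
    """Hypothetical stand-in for one LLM-as-a-Judge call."""
    await asyncio.sleep(0.01)  # simulate network latency
    return 0.9


async def evaluate_all(case_ids, max_concurrency=10, per_call_timeout=5.0):
    """Score every case with at most `max_concurrency` calls in flight."""
    semaphore = asyncio.Semaphore(max_concurrency)

    async def guarded(case_id):
        async with semaphore:
            # A per-call timeout keeps one stuck request from hanging the job.
            return await asyncio.wait_for(fake_judge(case_id), per_call_timeout)

    return await asyncio.gather(*(guarded(case_id) for case_id in case_ids))


scores = asyncio.run(evaluate_all(range(100)))
```

With ten calls in flight, 100 evaluations take roughly a tenth of the sequential time. The per-call timeout complements the pipeline-level `timeout-minutes`: the first fails a single slow request quickly, the second is the backstop for the whole job.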

Running DeepEval Locally with Ollama — Zero API Cost

This is the feature most DeepEval review articles completely ignore. If you are a freelancer, working on a personal project, or running evaluations at high volume — using Ollama with a local model eliminates API costs entirely.

```python
from deepeval.metrics import AnswerRelevancyMetric
from deepeval.models import OllamaModel

# Use local Llama 3.1 as your judge instead of GPT-4
local_judge = OllamaModel(model="llama3.1")

metric = AnswerRelevancyMetric(
    threshold=0.7,
    model=local_judge,
)
```

Running 500 DeepEval evaluations using GPT-4o costs approximately $2.50 to $5.00, depending on prompt length. The same run using local Llama 3.1 via Ollama costs $0 in API fees. For CI/CD pipelines running on every pull request, that cost difference compounds significantly over a month.
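A quick back-of-the-envelope calculation shows how that adds up. The per-run cost range is the one quoted above; the number of pipeline runs per day and working days per month are assumptions you should replace with your own figures.

```python
EVALS_PER_RUN = 500
COST_PER_RUN_USD = (2.50, 5.00)  # range quoted above for GPT-4o as judge
RUNS_PER_DAY = 10                # assumption: pipeline runs per working day
WORKING_DAYS = 22                # assumption: working days per month

cost_per_eval = tuple(cost / EVALS_PER_RUN for cost in COST_PER_RUN_USD)
monthly_cost = tuple(cost * RUNS_PER_DAY * WORKING_DAYS for cost in COST_PER_RUN_USD)

print(f"Per evaluation: ${cost_per_eval[0]:.3f} to ${cost_per_eval[1]:.3f}")
print(f"Per month:      ${monthly_cost[0]:,.0f} to ${monthly_cost[1]:,.0f}")
# Per evaluation: $0.005 to $0.010
# Per month:      $550 to $1,100
```

Half a cent per evaluation sounds negligible, but under these assumptions it becomes a few hundred to about a thousand dollars a month in judge API fees.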

DeepEval Pricing — Open Source vs Confident AI

This is the most confusing aspect of DeepEval, and most reviews blur the line deliberately.

| Tier | Cost | What You Get |
|---|---|---|
| DeepEval Open Source | Free forever | Full evaluation framework, all metrics, CLI runner, local execution |
| Confident AI Free | Free | Basic cloud dashboard, limited dataset storage |
| Confident AI Starter | $19/month | Team dashboards, versioned datasets, shared test suites |
| Confident AI Pro | Custom pricing | Enterprise observability, SSO, priority support |

Pricing is subject to change — always check the official website at confident-ai.com for current rates.

The honest answer — the open-source DeepEval framework gives you everything you need for CI/CD integration, all metrics, and local execution at zero cost. The Confident AI paid tiers add team collaboration features and cloud dashboards. For individual SDETs and small teams, the free open-source version is completely sufficient.

DeepEval Pros and Cons

In this DeepEval review, here are the real pros and cons based on practical usage:

Pros:

- PyTest-native, so the learning curve is minimal for Python SDETs

- Full open-source — no vendor lock-in for core functionality

- CI/CD integration is genuinely straightforward

- Local LLM support via Ollama eliminates API costs

- Multi-turn agent testing is the best available in 2026

- Synthetic dataset generation is built in

- G-Eval enables custom subjective metrics in plain English

- Active development — major updates shipped in early 2026

Cons:

- LLM evaluations are slow — pipeline timeout management required

- G-Eval scores are not perfectly calibrated — periodic human auditing needed

- Confident AI free tier dashboard is limited — team features require a paid plan

- RAGAS has stronger academic RAG metrics for research-grade applications

- Requires OpenAI API key or local Ollama setup — not zero-dependency

- Large evaluation suites need async batching to avoid timeouts

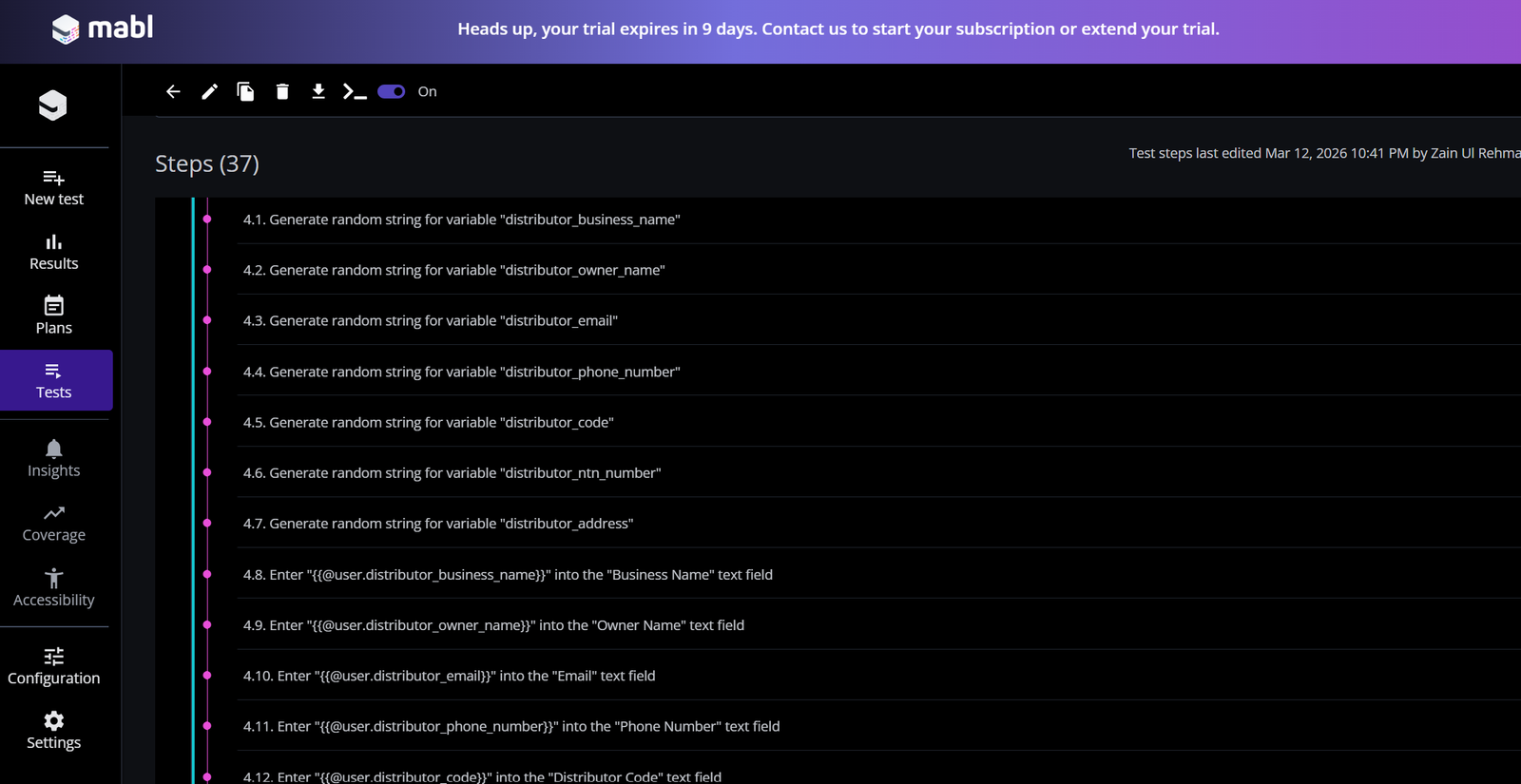

How Traditional SDETs Transition to DeepEval

This is the angle missing from every other DeepEval review — the direct mapping from what you already know to what DeepEval requires.

| Traditional SDET Concept | DeepEval Equivalent |
|---|---|
| Test assertion (assertEqual) | Metric threshold (threshold=0.7) |
| Expected value | Ground truth / expected output |
| Test fixture | LLMTestCase object |
| Test suite | DeepEval test file |
| CI/CD pipeline step | deepeval test run command |
| Pass/fail result | Score above or below threshold |
| Custom assertion | G-Eval metric |
| Regression suite | Golden dataset evaluation run |

If you already understand how PyTest fixtures and assertions work from building Selenium or API test frameworks, the transition to DeepEval takes days — not weeks.
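The last row of that mapping, the golden dataset run as a regression suite, is the one that differs most from traditional testing, so here is a small sketch of the idea in plain Python. The case names, scores, and the tolerated drop are all invented for illustration; in practice the scores would come from your metric runs.

```python
# Hypothetical per-case scores from a baseline run and the current run.
baseline = {"refund_policy": 0.92, "shipping_time": 0.88, "warranty": 0.81}
current = {"refund_policy": 0.90, "shipping_time": 0.62, "warranty": 0.84}

THRESHOLD = 0.7   # same role as threshold=0.7 on a metric
MAX_DROP = 0.10   # assumption: tolerated score drop versus the baseline


def find_regressions(baseline, current, threshold, max_drop):
    """Return case ids that fall below the threshold or drop too far."""
    failing = []
    for case_id, old_score in baseline.items():
        new_score = current[case_id]
        if new_score < threshold or old_score - new_score > max_drop:
            failing.append(case_id)
    return failing


regressions = find_regressions(baseline, current, THRESHOLD, MAX_DROP)
```

Small score movements are expected because judge scoring is not deterministic, which is why the check tolerates a drop instead of demanding identical values the way `assertEqual` would.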

For the framework design principles that transfer directly, read our best Selenium frameworks guide. For the full QA to AI testing career path, read our QA to SDET transition guide.

DeepEval in Your Full-Stack Testing Architecture

DeepEval does not replace your existing test stack. It adds the LLM evaluation layer to it.

A complete full-stack AI application test architecture in 2026 looks like this:

Layer 1 — UI Tests: Playwright or Selenium verifies that LLM responses render correctly in the frontend interface. Read our Selenium vs Playwright comparison for the right tool choice.

Layer 2 — API Tests: Traditional API testing verifies response times, status codes, and rate limiting on your LLM endpoints. Our best API testing tools guide covers the right tools for this layer.

Layer 3 — LLM Evaluation: DeepEval asserts semantic quality — faithfulness, relevancy, hallucination rate — using LLM-as-a-Judge scoring.

Layer 4 — Security: Promptfoo red teaming for prompt injection and data leakage vulnerabilities.

All four layers run in the same GitHub Actions pipeline. All four must pass for a deployment to proceed.

Disclosure: This article contains affiliate links. If you purchase through these links, I earn a small commission at no extra cost to you.

To build the Python and PyTest foundation that makes DeepEval immediately accessible, the Selenium Python Automation course on Udemy covers the core framework design skills that transfer directly to LLM evaluation engineering. Rated 4.6 stars.

Final Thoughts on This DeepEval Review

DeepEval is the right tool for SDETs entering AI testing in 2026. The PyTest-native architecture removes the biggest barrier — you do not need to learn a new testing paradigm from scratch. You extend the one you already know.

The limitations are real but manageable. Pipeline timeouts require explicit configuration. G-Eval scores need periodic human validation. Large evaluation suites need async batching. None of these are dealbreakers — they are engineering problems with engineering solutions.

The verdict: if you are a Python automation engineer building or testing LLM applications, DeepEval belongs in your stack. If you are doing academic RAG research, add RAGAS alongside it. If you need enterprise team dashboards, budget for Confident AI Starter at $19/month.

The engineers who are proficient in DeepEval today are exactly who companies are trying to hire in 2026. The supply of qualified AI testing engineers is far smaller than the demand. Add this to your portfolio now.

For the complete LLM testing picture beyond DeepEval, read our how to test LLM applications guide. To see how this fits your broader SDET career, read our SDET salary guide for the compensation data on AI testing specialisations.

Frequently Asked Questions

What is DeepEval, and how does it work for LLM testing in 2026?

DeepEval is an open-source Python framework for evaluating LLM application outputs. It works by running test cases through configurable metrics — Faithfulness, Answer Relevancy, Hallucination, and more — using LLM-as-a-Judge scoring. It integrates natively with PyTest and GitHub Actions, making it immediately familiar to SDETs with Python automation experience.

How do you use DeepEval for automated LLM evaluation step by step?

Install DeepEval with pip install deepeval. Create an LLMTestCase with your input, actual output, and retrieval context. Define metrics with quality thresholds. Run deepeval test run your_test_file.py. The suite passes if all metric scores exceed their thresholds and fails if any drop below — blocking deployment automatically.

Is DeepEval worth it for QA engineers and SDETs in 2026?

Yes. The PyTest-native architecture means the learning curve is minimal for Python automation engineers. The open-source free tier covers all core evaluation needs. CI/CD integration is genuinely straightforward. For SDETs moving into AI testing, DeepEval is the most practical entry point available in 2026.

How does DeepEval compare to RAGAS for RAG evaluation?

DeepEval wins on developer experience, CI/CD integration, and agent testing. RAGAS wins on academic RAG research depth and is more widely cited in research papers. Most production engineering teams use DeepEval for pipeline integration and add RAGAS metrics for deeper RAG analysis. They are complementary rather than mutually exclusive.

What metrics does DeepEval use to evaluate LLM responses?

DeepEval covers three metric categories. RAG metrics include Faithfulness, Answer Relevancy, Contextual Precision, and Contextual Recall. Generation metrics include Hallucination, Answer Correctness, and G-Eval for custom criteria. Agentic metrics include Task Completion, Tool Correctness, and Step Efficiency for multi-turn agent evaluation.

Can DeepEval be integrated into CI/CD pipelines for AI testing?

Yes — this is one of DeepEval’s strongest features. It integrates directly with GitHub Actions, GitLab CI, and Jenkins using the deepeval test run command. Set explicit timeouts of 10 to 15 minutes to prevent pipeline hangs from slow LLM evaluation calls. Use async batching for large test suites.

How much does DeepEval cost, and is there a free tier?

The core DeepEval open-source framework is completely free. It covers all metrics, CI/CD integration, and local execution with no limitations. Confident AI — the commercial cloud platform — starts at $19/month for team dashboards and versioned datasets. Most individual SDETs and small teams need only the free open-source version.

What are the main limitations of DeepEval for production testing?

Three limitations matter most. First — evaluation speed. LLM evaluations take minutes, not seconds, requiring pipeline timeout management. Second — G-Eval calibration. LLM-as-a-Judge scoring occasionally deviates from human rater scores, requiring periodic human-in-the-loop auditing. Third — API dependency. You need either an OpenAI API key or a local Ollama setup to run evaluations.

Does learning DeepEval improve career opportunities for SDETs in 2026?

Significantly. Companies building LLM products urgently need engineers who understand both traditional automation and AI evaluation. DeepEval proficiency combined with existing Selenium or Playwright framework skills creates a skill combination that very few candidates currently have. Check our SDET salary guide for compensation data on AI testing specialisations.