If you are a QA engineer or SDET wondering how to test LLM applications in 2026, this guide gives you a practical, engineering-focused blueprint. Not a vendor pitch. Not a data science tutorial. A real automation engineer’s approach to AI testing.

Testing LLM applications breaks almost every assumption that traditional automation is built on. The techniques that work for Selenium, API testing, and CI/CD pipelines need to evolve when the system under test is non-deterministic by design. This guide shows you exactly how to make that evolution.

The demand for engineers who know how to test LLM applications is growing faster than the supply of qualified candidates.

Table of Contents

Why Traditional Testing Fails for LLM Applications

Before diving into how to test LLM applications, you need to understand why your existing toolkit is insufficient on its own.

In traditional automation, you write assertions like this:

# Traditional deterministic test

assert response == "Your order has been confirmed."This works because the same input always produces the same output. Software is deterministic.

LLMs are not deterministic. Ask the same question twice, and you get two different but equally valid answers. Your traditional assertion fails on the second run — not because the application is broken, but because the output varied naturally.

This is the core challenge to test LLM applications in 2026. You cannot test for exact outputs. You must test for meaning, quality, accuracy, and safety — using probabilistic scoring rather than binary pass/fail.

This shift from deterministic to probabilistic testing is the most important mindset change for any SDET moving into AI quality engineering. We covered the broader career transition in our QA to SDET guide — LLM testing is the next frontier of that journey.

The 3 Pillars of LLM Testing Every QA Engineer Must Know

Every effective LLM test framework is built on three core components. Every engineer involved in LLM application testing must understand them first.

Pillar 1 — Golden Datasets: A curated, version-controlled collection of input and expected output pairs. This is your ground truth. When you run evaluations, you compare actual LLM outputs against this dataset. Building and maintaining a quality golden dataset is harder than most articles admit — we cover this in detail later.

Pillar 2 — Experiment Runners: The execution engine that takes your golden dataset, sends each input to the LLM, collects responses, and passes them to the evaluator. Tools like DeepEval, Promptfoo, and RAGAS serve this role.

Pillar 3 — Evaluators: The scoring function that grades each LLM response. This is where LLM-as-a-Judge comes in — using a powerful model like GPT-4 or Claude to grade the outputs of your application model against your expected results.

Together, these three pillars form the evaluation pipeline that replaces your traditional assertion-based test suite.

The LLM Testing Hierarchy — Unit, Functional, Regression

Just like traditional automation has the test pyramid, LLM testing has its own hierarchy that gives you a structured way to test LLM applications at every level of complexity.

Unit Evals — Single Prompt Testing: Testing one prompt, one response, one evaluation. You are checking that a specific input produces an output meeting your quality threshold. Fast, cheap, and run on every pull request.

Example: “Does this customer service prompt always respond politely, regardless of how aggressive the user message is?”

Functional Evals — RAG Pipeline and Agent Testing Testing the full pipeline — retrieval, augmentation, and generation together. You are verifying that the right data was retrieved from your database before the LLM generated its response. This requires specific metrics like Context Precision and Context Recall.

Regression Evals — Golden Dataset Runs: Running your full golden dataset before every release. This is your LLM equivalent of a full regression suite. If your hallucination rate increases or your faithfulness score drops below threshold — the build fails.

For a deeper understanding of how this testing hierarchy fits into CI/CD pipelines, read our JMeter vs k6 comparison, which covers pipeline integration patterns that apply directly to LLM eval workflows.

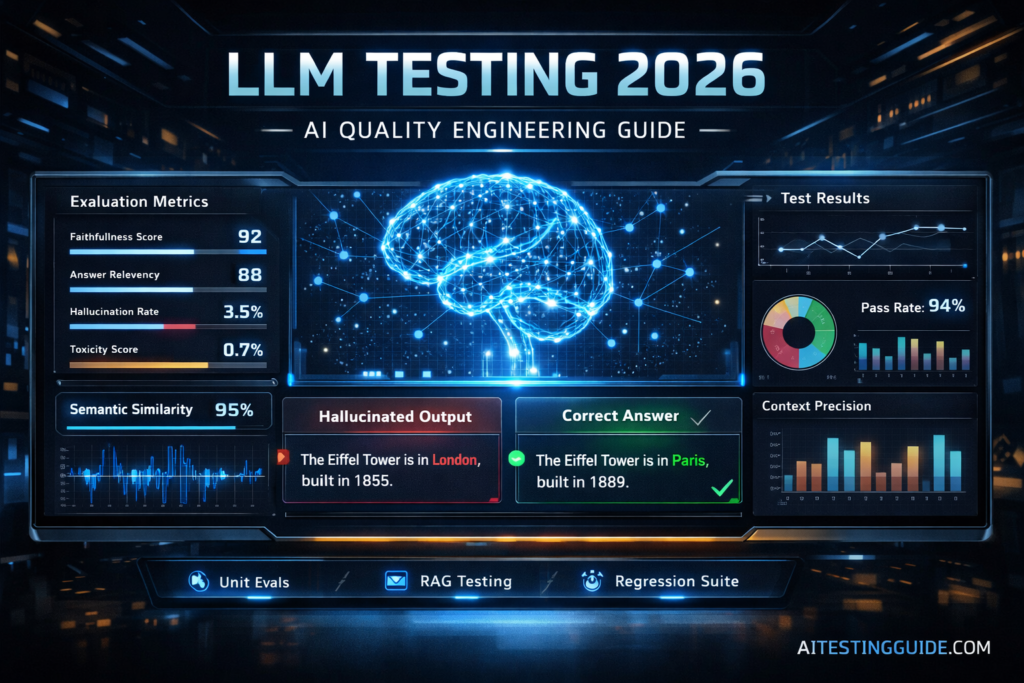

The Core LLM Testing Metrics You Must Understand

To effectively test LLM applications, you need to understand the key evaluation metrics used across different testing layers.

| Metric | What It Measures | Testing Layer | Tool |

|---|---|---|---|

| Faithfulness | Does the answer come from the context or is it hallucinated? | RAG pipeline | RAGAS, DeepEval |

| Answer Relevancy | Does the response actually answer the question asked? | All layers | DeepEval, Promptfoo |

| Context Precision | Did the retrieval system fetch the right documents? | RAG retrieval | RAGAS |

| Context Recall | Did the retrieval system fetch all relevant documents? | RAG retrieval | RAGAS |

| Hallucination Rate | How often does the model generate false information? | All layers | DeepEval, Giskard |

| Toxicity Score | Does the output contain harmful or inappropriate content? | Safety layer | Giskard, Promptfoo |

| Semantic Similarity | Does the meaning of the output match the expected answer? | All layers | DeepEval, custom |

| LLM-as-a-Judge Score | How does a stronger model rate this response overall? | All layers | DeepEval, Promptfoo |

The Best Tools for Testing LLM Applications in 2026

Here is an honest, vendor-agnostic breakdown of the tools that actually matter. Not a list of whichever tool paid for the most blog posts.

DeepEval — Best for SDETs and CI/CD Integration

DeepEval is the most important tool to test LLM applications for QA engineers and SDETs in 2026. It is built on top of PyTest, which means if you already know Python automation, the learning curve is almost zero.

Here is a basic DeepEval test that any SDET can run immediately:

from deepeval import assert_test

from deepeval.metrics import AnswerRelevancyMetric

from deepeval.test_case import LLMTestCase

def test_customer_support_response():

test_case = LLMTestCase(

input="What is your refund policy?",

actual_output="Our refund policy allows returns within 30 days.",

expected_output="Customers can return items within 30 days for a full refund."

)

metric = AnswerRelevancyMetric(threshold=0.7)

assert_test(test_case, [metric])This test fails automatically if the answer relevance score drops below 0.7. That threshold becomes your quality gate in CI/CD.

DeepEval integrates directly into GitHub Actions. Add this to your workflow:

name: LLM Evaluation Tests

on: [push, pull_request]

jobs:

llm-evals:

runs-on: ubuntu-latest

steps:

- uses: actions/checkout@v3

- name: Set up Python

uses: actions/setup-python@v4

with:

python-version: '3.11'

- name: Install DeepEval

run: pip install deepeval

- name: Run LLM evaluations

run: deepeval test run test_llm_evals.py

env:

OPENAI_API_KEY: ${{ secrets.OPENAI_API_KEY }}Now your build fails automatically if your LLM starts hallucinating or degrading in quality. That is LLM testing treated as real engineering — not a science experiment.

RAGAS — Best for RAG Pipeline Testing

RAGAS is the industry standard for testing Retrieval-Augmented Generation pipelines. If your application combines an LLM with a document database, vector store, or knowledge base — RAGAS gives you the specific metrics to test the retrieval layer independently from the generation layer.

from ragas import evaluate

from ragas.metrics import faithfulness, answer_relevancy, context_precision

from datasets import Dataset

test_data = {

"question": ["What is our refund policy?"],

"answer": ["Returns are accepted within 30 days."],

"contexts": [["Our policy allows 30-day returns for all products."]],

"ground_truth": ["Customers can return items within 30 days."]

}

dataset = Dataset.from_dict(test_data)

results = evaluate(dataset, metrics=[faithfulness, answer_relevancy, context_precision])

print(results)RAGAS scores each metric between 0 and 1. Set your thresholds — anything below 0.75 on faithfulness means your RAG pipeline is hallucinating, and the build should fail.

Promptfoo — Best for Red Teaming and Multi-Model Testing

Promptfoo is a CLI-driven tool that excels at two things: testing prompt variations across multiple models simultaneously, and automated red teaming for security vulnerabilities.

Red teaming means systematically attacking your LLM with adversarial inputs — prompt injection attempts, jailbreak attempts, and edge cases that might bypass your safety filters. Promptfoo automates this entire process.

For SDET engineers familiar with security testing concepts from tools like OWASP ZAP, Promptfoo’s red teaming feels immediately familiar — it is penetration testing applied to the AI layer.

Langfuse — Best for Production Observability

Langfuse bridges pre-production testing and post-production monitoring. While DeepEval runs before release, Langfuse watches your LLM application in production — tracing every request, logging every response, and alerting you when quality degrades.

This is the LLM equivalent of application performance monitoring. When a user reports a bad response, Langfuse traces it back to the exact prompt template, retrieval query, or model version that caused it.

Building an Enterprise LLM Test Framework — The Full Blueprint

This blueprint gives you everything needed to test LLM applications at enterprise scale. Here is how to build a complete, maintainable LLM test framework from scratch as an SDET.

Step 1 — Build Your Golden Dataset

Your golden dataset is your most valuable asset. Here is how to build one properly:

Source your data from real usage. The best golden datasets come from real production queries — anonymised and labelled. If you are building a new application, create representative examples covering normal cases, edge cases, and adversarial inputs.

Version control your dataset. Store your golden dataset in Git alongside your test code. Use JSON or CSV with a clear schema:

[

{

"id": "001",

"input": "What is the refund policy?",

"expected_output": "30-day return policy",

"context": "Our refund policy document",

"tags": ["policy", "customer_service"],

"version": "1.0"

}

]Update it when business logic changes. A golden dataset that does not evolve with your product becomes misleading. Treat dataset updates with the same code review process as framework changes.

Step 2 — Define Your Quality Thresholds

Before writing a single test, define what “good enough” means for your application. These thresholds become your quality gates.

Recommended starting thresholds for most production LLM applications:

- Faithfulness: minimum 0.75 — anything below means hallucination risk

- Answer Relevancy: minimum 0.70 — responses must address the question

- Context Precision: minimum 0.65 — retrieval system must fetch relevant docs

- Toxicity: maximum 0.10 — safety filter must catch harmful outputs

These are starting points. Adjust based on your application’s risk profile. A medical information chatbot needs faithfulness above 0.90. A creative writing assistant can tolerate more variation.

Step 3 — Structure Your Test Framework

llm-test-framework/

├── datasets/

│ ├── golden_dataset_v1.json

│ └── adversarial_prompts.json

├── tests/

│ ├── test_answer_relevancy.py

│ ├── test_faithfulness.py

│ ├── test_rag_pipeline.py

│ └── test_security_redteam.py

├── config/

│ └── thresholds.yaml

├── .github/

│ └── workflows/

│ └── llm_evals.yml

├── requirements.txt

└── README.mdThis structure separates your datasets from your tests and your configuration from your code. Any SDET familiar with POM framework design will recognise this pattern immediately — the same separation of concerns principles apply.

For the full framework design principles that transfer from traditional to AI testing, read our best Selenium frameworks guide.

Step 4 — Integrate Full-Stack Testing

This is what nobody else covers. Real LLM applications have multiple layers, and each layer needs testing independently.

Layer 1 — UI Layer When your LLM generates a response, does it display correctly in the frontend? Long responses can break UI layouts. Markdown formatting can render incorrectly. Use Playwright or Selenium to verify that LLM outputs render correctly in your interface.

python

# Playwright test verifying LLM response renders correctly

def test_llm_response_ui_rendering(page):

page.goto("https://your-app.com/chat")

page.fill("#chat-input", "What is your refund policy?")

page.click("#send-button")

response_element = page.locator(".chat-response")

expect(response_element).to_be_visible()

expect(response_element).not_to_have_text("")

# Verify response fits within UI container

assert response_element.bounding_box()["height"] < 500Layer 2 — API Layer Test your LLM API endpoints directly — response time, token usage, rate limiting, and error handling. A response that is semantically correct but takes 30 seconds to arrive is still a failed test. Use your existing API testing skills here — our best API testing tools guide covers the tools that work directly for this layer.

Layer 3 — LLM Evaluation Layer DeepEval and RAGAS evaluate the semantic quality of LLM responses — faithfulness, relevancy, and hallucination rate.

Layer 4 — Security Layer Promptfoo red teaming for prompt injection, jailbreaks, and data leakage.

Temporal Drift — The LLM Testing Problem Nobody Talks About

Temporal drift is one of the hardest problems when you test LLM applications in production. It occurs when model output quality gradually degrades over time without any code changes.

This happens because LLM providers regularly update their models. A prompt that scored 0.85 faithfulness in January might score 0.70 in March after the provider updated their base model.

Your CI/CD pipeline catches regressions at deployment time. But temporal drift happens between deployments. The solution is continuous evaluation — automatically sampling production traffic daily, running it through your evaluation pipeline, and alerting when scores drop below the threshold.

Langfuse handles this automatically in production. For pre-production environments, schedule your DeepEval test suite to run daily — not just on deployment.

LLM Testing for Your SDET Career — Why This Matters Now

This is the career angle nobody in the LLM testing space is writing about.

Companies are rapidly building LLM-powered products and urgently need engineers who know how to test LLM applications properly. Traditional data scientists do not know how to build CI/CD integrated evaluation pipelines. Traditional SDETs do not yet know the LLM evaluation tools.

The engineer who bridges both worlds — who can build a DeepEval test suite, integrate it into GitHub Actions, combine it with Playwright UI tests, and present it all in a clean GitHub repository — is genuinely rare in 2026 and commands a significant salary premium.

An LLM testing portfolio project would look like this:

- A FastAPI application with an LLM endpoint

- DeepEval test suite with faithfulness and relevancy metrics

- RAGAS evaluation for the RAG pipeline

- Playwright tests for UI rendering

- GitHub Actions runs all evaluations on every PR

- README documenting your quality thresholds and rationale

This single portfolio project demonstrates more advanced SDET skills than most candidates show across their entire resume. Combined with the traditional framework skills from our SDET resume guide — this is the portfolio that gets you hired into AI engineering teams in 2026.

For the full learning path that leads to these skills, read our how to become an SDET guide.

Disclosure: This article contains affiliate links. If you purchase through these links, I earn a small commission at no extra cost to you.

To build the Python automation foundation needed for DeepEval and RAGAS integration, the Selenium Python Automation course on Udemy gives you the PyTest and framework design skills that transfer directly to LLM evaluation engineering. Rated 4.6 stars with hands-on projects.

Final Thoughts

Learning how to test LLM applications is the most valuable skill addition any QA engineer or SDET can make in 2026. The market for engineers who understand both traditional automation and AI evaluation is growing faster than the supply of qualified candidates.

The core shift is conceptual — from deterministic assertions to probabilistic scoring. Once you accept that LLM outputs vary naturally and build your test framework around quality thresholds instead of exact matches, everything else follows logically.

Start with DeepEval — it integrates directly with PyTest and GitHub Actions, so you are using familiar tooling from day one. Add RAGAS if your application uses RAG architecture. Add Promptfoo when you are ready to automate security testing. Combine all three with Playwright for full-stack AI testing coverage.

The engineers who master this combination now will define what SDET roles look like in 2027 and beyond. This is not a future trend — it is a present opportunity with very few people yet qualified to fill it.

Frequently Asked Questions

How do you test LLM applications in 2026 step by step?

To test LLM applications effectively, start by defining your golden dataset. Set quality thresholds for faithfulness, answer relevancy, and hallucination rate. Write DeepEval tests using PyTest. Integrate them into GitHub Actions to block deployments when quality drops. Add RAGAS for RAG pipeline testing and Promptfoo for automated red teaming.

What are the best tools for testing LLM apps for QA engineers?

DeepEval is the best starting point — it is PyTest-native and CI/CD ready. RAGAS is essential for RAG pipeline evaluation. Promptfoo handles multi-model testing and red teaming. Langfuse covers production observability. For full-stack coverage, combine these with Playwright for UI layer testing and your existing API testing tools.

How is LLM testing different from traditional software testing?

Traditional testing is deterministic — the same input always produces the same output. LLM testing is probabilistic, meaning outputs can vary naturally. When you test LLM applications, you validate responses using quality thresholds instead of exact string matches. Techniques like semantic similarity and LLM-as-a-judge scoring replace strict assertions such as assertEqual.

How do you validate accuracy and hallucinations in LLM outputs?

Use the Faithfulness metric from DeepEval or RAGAS — it scores whether the model’s answer is grounded in the provided context or hallucinated from external knowledge. Set a minimum threshold of 0.75 for most applications. Any response scoring below that threshold fails the test and blocks deployment.

What is the best framework for automating LLM testing?

DeepEval is the most SDET-friendly framework in 2026. It uses PyTest conventions, integrates with GitHub Actions, and covers all core evaluation metrics out of the box. For RAG-specific applications, add RAGAS. For security testing, add Promptfoo. The combination of all three gives you complete LLM test coverage.

How do SDETs design test cases for LLM-based applications?

Structure your test cases around the three pillars — golden dataset, experiment runner, and evaluator. Write unit evals for individual prompts, functional evals for complete RAG pipelines, and regression evals for pre-release validation. Combine these with traditional UI and API tests for full-stack coverage. Treat LLM eval thresholds with the same rigour as traditional pass/fail assertions.

What skills do QA engineers need to move into AI and LLM testing?

Python programming is essential — DeepEval and RAGAS are both Python-based. Understanding of REST APIs and CI/CD pipelines transfers directly. A basic understanding of how LLMs and RAG architectures work helps significantly. The traditional automation skills from your best Selenium frameworks background transfer more than most engineers realise.

Is learning LLM testing worth it for QA engineers in 2026?

Yes — unequivocally. Companies need engineers who can test LLM applications faster than they can hire them. The salary premium for engineers with both skill sets is significant and growing. This is the highest-value skill addition any SDET can make right now. The learning investment is 2 to 3 months to reach proficiency with DeepEval and RAGAS — the career return is measured in years.